Diagnosing Gender Bias in Image Recognition Systems

Abstract

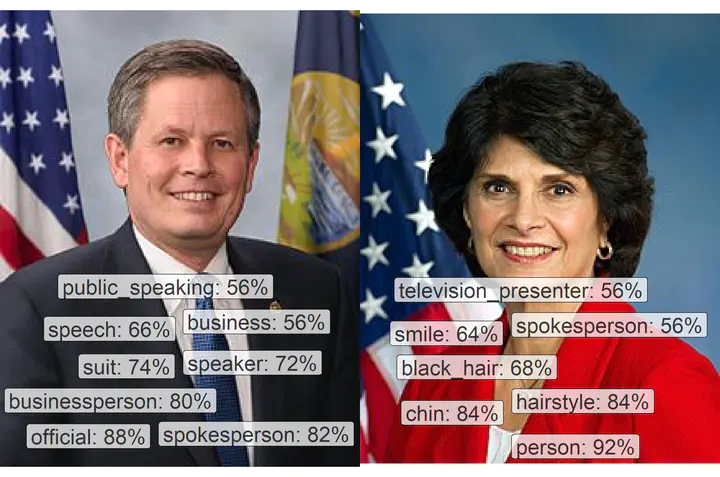

Image recognition systems promise to learn from images at scale without requiring expert knowledge. However, past research suggests that machine learning systems often produce biased output. In this article, we evaluate potential gender biases of commercial image recognition platforms using photographs of U.S. Members of Congress and a large number of Twitter images posted by these politicians. Our crowdsourced validation shows that commercial image recognition systems can produce labels that are correct and biased at the same time, because they selectively report only a subset of the many possible true labels. We find that images of women received three times more annotations related to physical appearance than images of men. Moreover, women in images are recognized at substantially lower rates than men. We discuss how encoded biases like these affect the visibility of women, reinforce harmful gender stereotypes, and limit the validity of the insights we can gather from such data.